Cleaning up Twitter data in Excel for analysis

A lot of academic work that draws on tweets as primary data will use hashtag archives as the basis of their study. What’s nice about that is that you can use tools that capture data and present them to you in a usable manner (e.g. a CSV file). If you’re doing something a little different, like revie

A lot of academic work that draws on tweets as primary data will use hashtag archives as the basis of their study. What’s nice about that is that you can use tools that capture data and present them to you in a usable manner (e.g. a CSV file). If you’re doing something a little different, like reviewing tweets from a group of individuals, that’s a little harder.I’ve been working with my BCU colleague Inger-Lise Bore on some research into fan fiction written on Twitter (it started with this blog post – we’re presenting it at MeCCSA 2011 tomorrow). There’s no hashtag used to label the tweets we want to study – we were looking instead at the entire output from a few dozen tweets. We found a few web services that promised ways of capturing and archiving this type of Twitter data for us, but they didn’t work. At all. So instead we had to use some pretty unsophisticated means to grab the data.

Grab the data

I simply copied and pasted the tweets of all characters I was looking at directly from a Twitter list (http://twitter.com/joshualyman/colleagues). This approach allowed me to take the latest tweet at the point of collection and all previous tweets that were still accessible to Twitter’s public timeline and search tools. In the case of this list, that gave us a sample across a number of days.In this example I’m trying to track un tagged tweets from several different accounts, which are all linked by being part of a list. I need to go into the list at twitter.com and then keep hitting the “more” button to get more and more tweets. I can only grab up to the limit of what Twitter keeps available, in this case that went back over 5 days and offered up about 797 tweets.Data was captured as a Safari Web Archive file, which preserves hyperlinks and allows us to see relations between tweets via the “in reply to” metadata / hyperlink. It was also captured as a PDF file. The HTML content was also copied and pasted into Excel, where it needed to be reformatted into a useful data structure for later coding.

Tidy the data

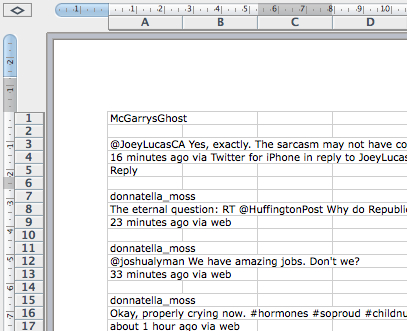

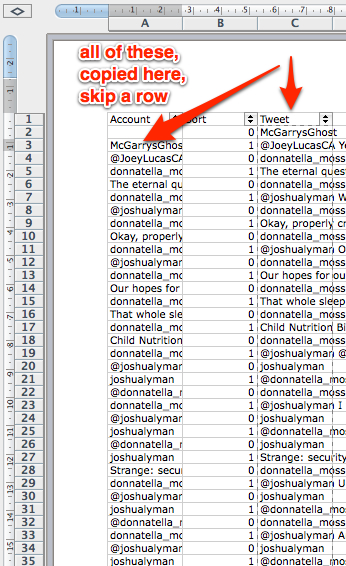

The initial spreadsheet looks like this.

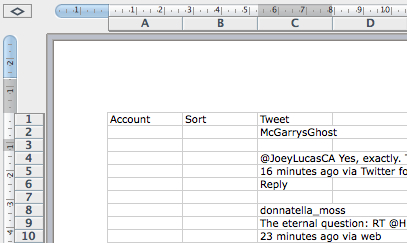

There’s a few simple tricks in Excel to help me reformat this ready for coding. Before you do anything save a backup of the raw data in case something goes wrong.Start by inserting two extra columns into your document (Col A and B, meaning your data is in Col C). Add an extra row that you can use for headings: your data should now start in C2.

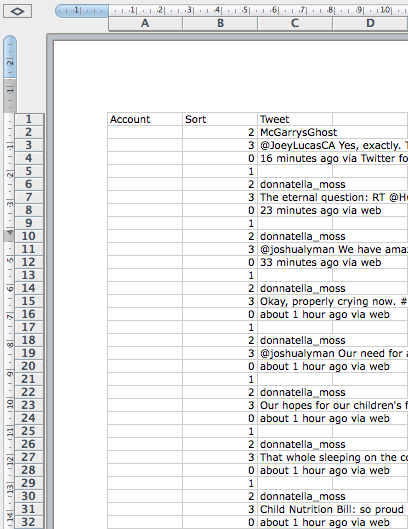

The first tweet may be formatted differently than the others, so tidy it up so it matches the pattern (I had to delete rows 3 & 6 to achieve that, YMMV).Now in cell B2 add this formula =MOD(ROW(),4) [for excel] or =MOD(ROW();4) [for google docs, numbers, open office etc.] and then fill this down to the bottom [method adapted from http://www.wikihow.com/Select-Alternate-Rows-on-a-Spreadsheet]. You might find you have some other data about trending topics etc. at the bottom of the spreadsheet – you can delete those.What you should now have is every line of the spreadsheet numbered from 0-3 (if your data is formatted differently, adjust the formula as appropriate – =MOD(ROW(),4) is for 4 lines that you need to code, =MOD(ROW(),3) would be for 3 etc.)

With that done you can now add a filter to each column, and then use that to edit the data. In my example data I don’t need the line that timestamps the tweet e.g. “about 1 hour ago via web” so I can remove these by filtering to show all fields labelled “0” and then deleting the row. Changing the filter back to “show all” and you’ll notice that the sort numbers are all out of whack – that’s because we now have a loop of three lines, but we’re numbering for 4. So I adjust the formula in B2 to =MOD(ROW(),3) and fill down – now all is fine again.

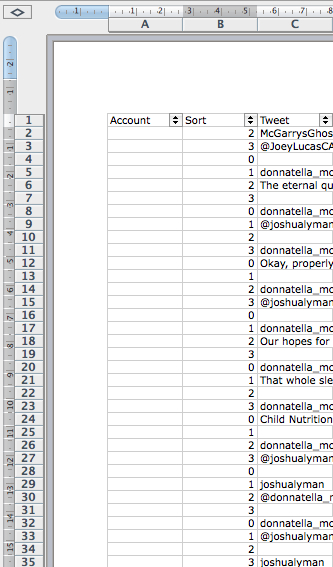

I then repeated the process of filter, delete, reset sort numbers to get rid of the blank lines after every tweet.The final step, I need to shift the account names (labelled 0 in my example data’s sort column at this point) into column A and match them with their tweets (so, that’s down 1 row and into Column A). That’s a simple copy and paste of all of Column C onto Column A starting from A3.

Finally, I can delete all the rows that just hold account names (Sort for code 0) and I’ve got my data ready for coding.It’s a pretty simple process – but there may be an easier way. Let me know in the comments if you have a better way.